Social Media Platforms’ Self-Regulation Era is Over

WASHINGTON – Rep. Cathy McMorris Rodgers, R-Wash., said at a congressional hearing today that, as a parent of three school-aged children, she considers Facebook, YouTube and Twitter her “biggest fear.”

After spikes in teenage suicides in her community, everyone she reached out to “[raised] the alarm about social media,” Rodgers said, pointing to research linking it to a rise of more than 60% in rates of depression, self-harm, suicides and suicide attempts between 2011 and 2018.

“You’ve abused your power to manipulate and harm our children,” she said as she scolded the CEOs of all three online platforms during a House Committee on Energy & Commerce hearing.

Teens who used their devices for more than five hours a day, she added, were 66% more likely to have a suicide-related incident than those who used them for one hour. But the harm resulting from a lack of content moderation and accountability is not limited to children.

Lawmakers pointed out that the tactics employed by the companies, whether through algorithms or content placement, threaten the mental and physical health not only of children but of society as a whole.

The CEOs came under fire from both Republican and Democratic lawmakers, who gauged how much responsibility they bear for protecting their users from harmful content, for the spread of disinformation and misinformation, and particularly for facilitating the rise of extremism that has led to events like the deadly storming of the U.S. Capitol in January.

Both Rep. Frank Pallone, D-N.J., and Rep. Jan Schakowsky, D-Ill., said the moment for “self-regulation” has passed.

The question is what to do next, especially as the companies appear to “just shrug off billion-dollar fines,” said Rep. Mike Doyle, D-Pa.

“You are picking engagement and profit over the health and safety of your users, our nation and our democracy,” Doyle added. “We will legislate to stop this.”

Despite continuous policy changes and promises to moderate their own content, each platform has “failed to protect your users and the world from the worst consequences of your creation,” Doyle said.

He also charged the companies with allowing the spread of misinformation surrounding the efficacy and safety of the COVID-19 vaccine. According to Pallone, this has left 30% of Americans either refusing the vaccine or at least unsure about taking it.

The underlying problem lies in these companies’ business models, which “exploit the human’s brain preference,” Pallone said.

“The more outrageous and extremist the content,” he explained, the more user engagement the platforms get, which brings them more ad dollars.

“They are not mere bystanders,” he added.

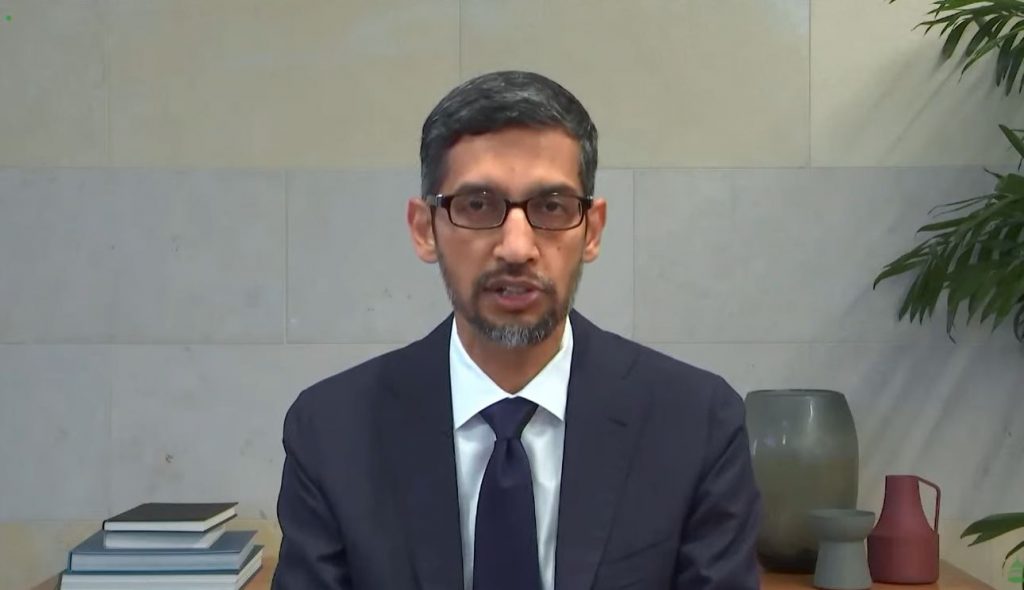

Facebook CEO Mark Zuckerberg countered that the people who stormed the Capitol, and former President Donald Trump, who told them to, should be the ones held accountable. Neither Zuckerberg nor Google CEO Sundar Pichai answered whether they bear some of this responsibility. Twitter CEO Jack Dorsey, however, admitted his company has some responsibility but pointed out that the “broader ecosystem” in which it operates needs to be accounted for.

Pichai said that Google has been committed to “[making] things better” for all communities, pointing out how the company helped businesses and publishers of content on its platform make $426 billion last year. However, he added, it is very difficult to combat misinformation while attempting to provide open access to its products and freedom of expression.

Every minute, more than 500 hours of video are uploaded to YouTube, and 15% of all daily searches on Google are entirely new. On the heels of January’s riot, the tech giant began to increase its moderation by removing content that incited violence from YouTube, removing apps from its store and banning ads that made any reference to the insurrection and the 2020 election. To combat COVID-related disinformation, Pichai also said that the company removed 850,000 YouTube videos and blocked close to 100 million ads.

However, if regulations “force” every business to act the same, Dorsey said, the result would limit innovation and consumer choice, an outcome that “diminishes free marketplace ideas.” What is needed, he explained, is true transparency across all platforms.

These “minor” self-imposed policy changes and “empty promises” mean nothing if the problem still exists, Pallone said.